The Productivity Claims Meet Reality

Garry Tan, CEO of Y Combinator, has open-sourced his Claude Code setup, which he says lets him write 10,000-20,000 lines of production code per day while running YC full-time. His gstack system turns Claude Code into a virtual engineering team with 13 specialist roles, from architecture planning to automated testing.

Yet on Reddit, a developer poses a question that cuts through the hype: "How is anyone keeping up with reviewing the flood of PRs created by claude code?" They report that while the code quality is good, the sheer volume has made their review process unmanageable—even after breaking tasks into smaller chunks.

This disconnect reveals the real bottleneck in AI-assisted development: not generating code, but integrating it into human workflows.

Anthropic's Vision: Beyond Code Generation

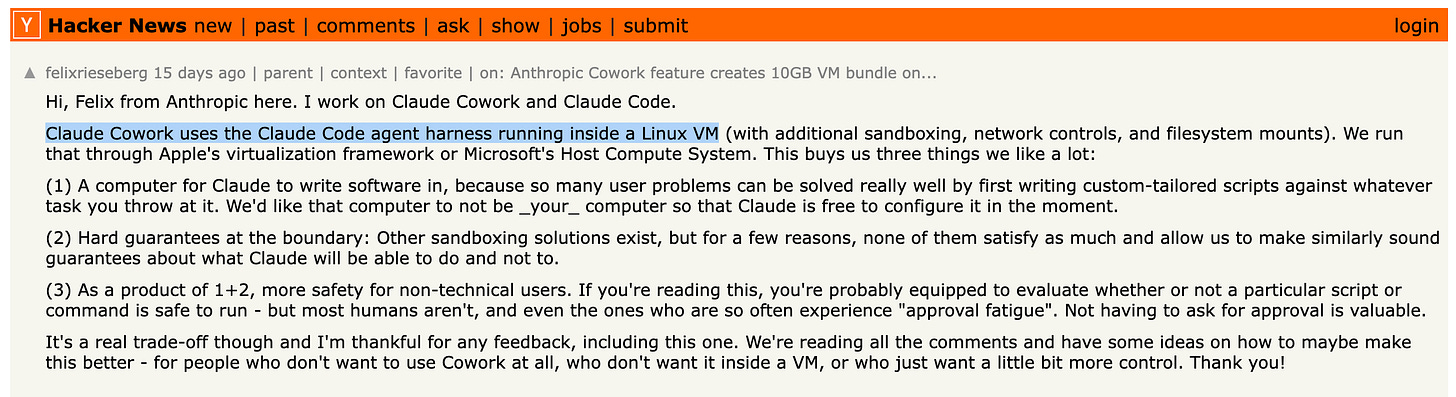

Meanwhile, Anthropic is pushing Claude's capabilities beyond pure code generation. Felix Rieseberg of Anthropic says that Claude Cowork, a VM-based tool that gives Claude its own computer environment, emerged from an unexpected observation: users were applying Claude Code to general knowledge work, not just programming.

Built in just 10 days, with Claude Code essentially writing the tool, Cowork reflects Anthropic's belief that AI should have dedicated computing environments rather than requiring constant human approval. As Rieseberg notes, the real frontier is the shift from "better chat" to trusted task execution.

The Integration Challenge Nobody Anticipated

Tan's gstack includes sophisticated features like:

- Parallel execution across multiple Claude sessions

- Automatic 100% test coverage generation

- Design audits with "AI slop detection"

- Real browser testing through visual AI capabilities

He reports writing over 600,000 lines of code in 60 days, an average of roughly 10,000 lines per day and output that "used to require a team of twenty."

But this extreme productivity creates its own problems. The Reddit developer's experience suggests that while AI can generate good code at unprecedented speeds, human review capacity hasn't scaled accordingly. Breaking work into smaller, more reviewable chunks only multiplies the number of PRs to manage.
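The arithmetic behind that last point can be sketched in a few lines of Python. This is a rough back-of-the-envelope model, not a measurement: the 10,000 lines per day comes from the figures quoted above, while the review speed (`REVIEW_LINES_PER_HOUR`) and the fixed per-PR cost of context switching and sign-off (`OVERHEAD_MINUTES_PER_PR`) are hypothetical values chosen purely for illustration.

```python
import math


def prs_per_day(lines_per_day: int, lines_per_pr: int) -> int:
    """Number of pull requests needed to ship one day's output."""
    return math.ceil(lines_per_day / lines_per_pr)


def review_hours_per_day(
    lines_per_day: int,
    lines_per_pr: int,
    review_lines_per_hour: float,
    overhead_minutes_per_pr: float,
) -> float:
    """Reviewer hours needed per day: reading time plus a fixed cost per PR."""
    reading_hours = lines_per_day / review_lines_per_hour
    pr_count = prs_per_day(lines_per_day, lines_per_pr)
    overhead_hours = pr_count * overhead_minutes_per_pr / 60
    return reading_hours + overhead_hours


if __name__ == "__main__":
    LINES_PER_DAY = 10_000        # lower end of the range quoted in the article
    REVIEW_LINES_PER_HOUR = 400   # ASSUMPTION: hypothetical human review speed
    OVERHEAD_MINUTES_PER_PR = 10  # ASSUMPTION: hypothetical fixed cost per PR

    for lines_per_pr in (1_000, 200):
        count = prs_per_day(LINES_PER_DAY, lines_per_pr)
        hours = review_hours_per_day(
            LINES_PER_DAY,
            lines_per_pr,
            REVIEW_LINES_PER_HOUR,
            OVERHEAD_MINUTES_PER_PR,
        )
        print(f"{lines_per_pr} lines/PR: {count} PRs/day, {hours:.1f} review hours/day")
```

Under these assumed numbers, reading time stays fixed at 25 reviewer hours per day regardless of PR size, while shrinking PRs from 1,000 to 200 lines raises the daily PR count from 10 to 50 and adds per-PR overhead on top. Smaller chunks may be easier to reason about individually, but in this simple model they do not reduce the total review load.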

What This Means for Development Teams

The clash between Tan's productivity claims and the struggles developers report on the ground highlights a critical transition point. As our recent coverage of developers becoming "managers of agents" predicted, the paradigm is shifting, though perhaps not smoothly.

Anthropic's push toward giving AI its own computing environment through Claude Cowork suggests one path forward: rather than generating code for human review, AI could handle more complete workflows independently. The skills feature in Claude Cowork, which enables reusable markdown-based workflows, hints at this future.

Yet the fundamental question remains: if a single person can generate 20,000 lines per day but teams can't review it fast enough, have we simply moved the bottleneck? The real challenge may not be making AI more productive, but rethinking how humans and AI collaborate on software development at scale.