The Implementation Bottleneck

The AI industry's rapid advancement continues to outpace the infrastructure needed to put it into practice, as shown by the range of problems users report across different segments of the ecosystem. From academic researchers hitting unexpected optimization barriers to everyday users losing assets on commercial platforms, the gap between AI's theoretical capabilities and its real-world usability is increasingly visible.

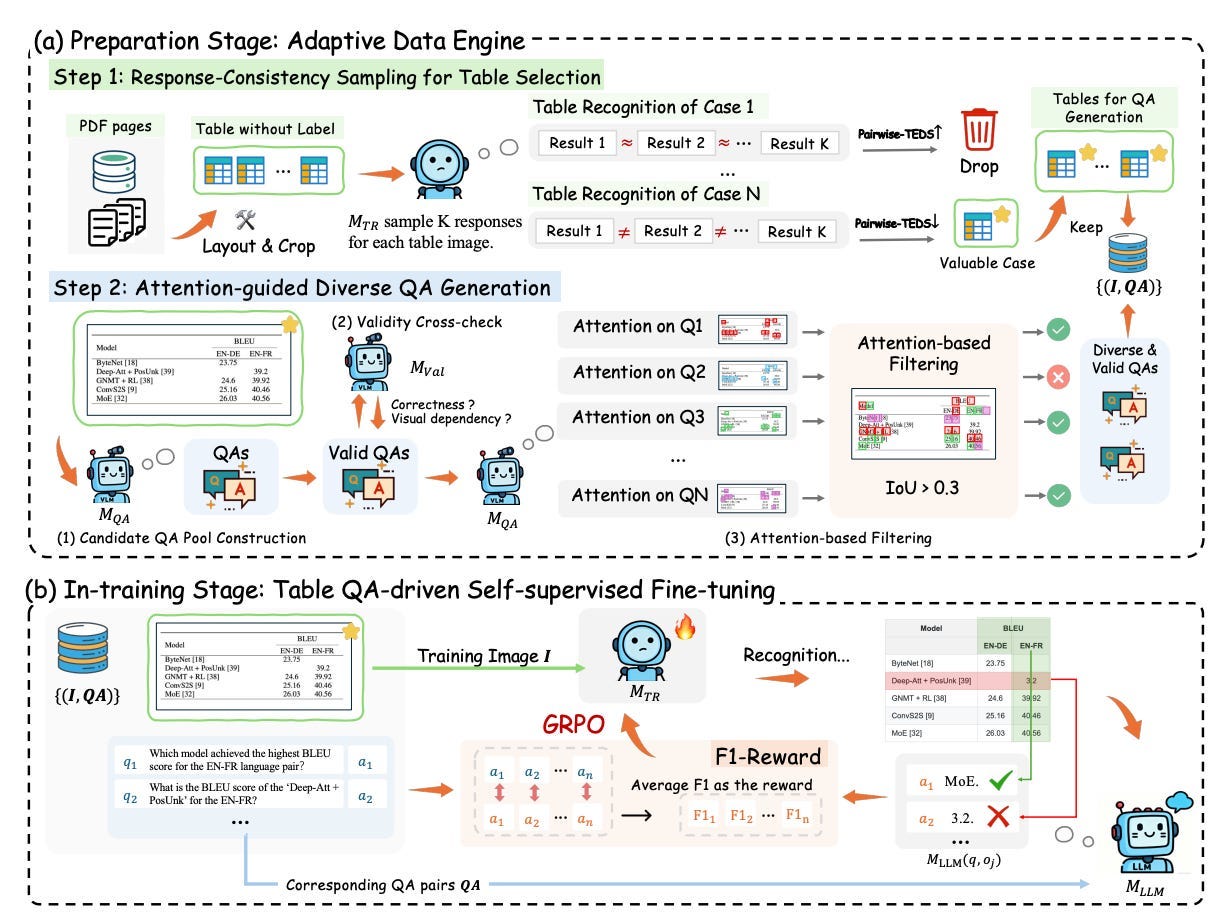

According to Towards AI, researcher Florian June's work on teaching 3B-parameter models to parse tables reveals a crucial insight: "the real bottleneck isn't in the model" itself. This finding challenges the prevailing assumption that improvements to model architecture alone will solve practical AI challenges. Instead, implementation details (data preprocessing, output formatting, and integration pipelines) emerge as the primary obstacles to effective deployment.

Professional Developers Face Basic Integration Hurdles

The implementation challenges extend beyond research labs into professional development environments. On r/ClaudeAI, developers working on production MVPs are encountering fundamental security concerns when integrating AI coding assistants. One developer expressed discomfort with allowing Claude to access .env files and secrets, highlighting the absence of established best practices for secure AI-assisted development workflows.

This stands in contrast to our previous coverage of enterprise AI agents promising millions in value. While corporations deploy massive GPU installations and secure sandboxes, individual developers lack basic tooling for safely incorporating AI assistants into sensitive projects.
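The .env concern raised in that thread can be made concrete with a minimal sketch. It assumes a simple `KEY=VALUE` file layout and shows one possible precaution: producing a redacted copy that keeps variable names but hides values before any file contents are shared with an assistant. The function name `redact_env` and the placeholder string are hypothetical. This is not an established best practice, and it does not handle multi-line quoted values.

```python
import re

# Matches simple KEY=VALUE lines, optionally prefixed with "export".
# Comments and blank lines do not match and are passed through unchanged.
_ASSIGNMENT = re.compile(r"^(\s*(?:export\s+)?[A-Za-z_][A-Za-z0-9_]*\s*=)(.*)$")


def redact_env(text: str, placeholder: str = "<redacted>") -> str:
    """Return .env-style text with every assigned value replaced by a placeholder.

    Assumption: one assignment per line. Multi-line quoted values are NOT
    handled and could leak, so treat this as a sketch only.
    """
    out = []
    for line in text.splitlines():
        match = _ASSIGNMENT.match(line)
        if match:
            # Keep the variable name and "=", drop the secret value.
            out.append(match.group(1) + placeholder)
        else:
            out.append(line)
    return "\n".join(out)


if __name__ == "__main__":
    sample = "# local settings\nAPI_KEY=sk-123\nexport DB_URL = postgres://x"
    print(redact_env(sample))
```

A redacted copy like this lets an assistant see which variables a project expects without exposing the values themselves. Whether that is sufficient depends on the tool and the project.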

Platform Reliability Issues Surface

Meanwhile, creative professionals using cloud-based AI platforms face their own set of challenges. Users of TensorArt reported the sudden disappearance of their LoRA models, with one r/StableDiffusion user asking, "Why did all of my LORAS disappear on tensorart?" This incident underscores the risks of relying on centralized platforms for AI asset management, a concern that contrasts sharply with NVIDIA's push for enterprise-grade infrastructure we covered in recent editorials.

The platform reliability issues extend to workflow implementation, where users attempting to recreate specialized workflows using ComfyUI's apps feature encounter technical barriers that weren't apparent in promotional materials.

The Local Deployment Alternative

In response to these centralized platform challenges, a growing segment of users seeks local, privacy-focused alternatives. On r/LocalLLaMA, creative writers are searching for voice recognition models that can run entirely on local hardware for note-taking and idea capture. One user specifically requested models that could "format my ramblings and put that in something like Obsidian," emphasizing the desire for integrated, privacy-preserving workflows.

This trend toward local deployment represents a countermovement to the cloud-first approach dominating enterprise AI strategies, revealing a bifurcation in the market between corporate mega-deployments and individual users prioritizing control and privacy.
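To illustrate the "format my ramblings" request, here is a minimal sketch of the last step of such a workflow. It assumes a transcript string has already been produced by some local speech-to-text model (that step is not shown), and it relies on the fact that an Obsidian vault is a folder of Markdown files. The function names, the `voice-note` tag, and the one-bullet-per-sentence layout are all hypothetical choices, not anything the original poster described.

```python
import re
from datetime import datetime
from pathlib import Path


def ramblings_to_note(transcript: str, title: str, created: datetime) -> str:
    """Turn a raw transcript into a Markdown note with one bullet per sentence."""
    # Collapse runs of whitespace, then split after sentence-ending punctuation.
    text = " ".join(transcript.split())
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    lines = [
        "---",  # YAML front matter block
        f"created: {created:%Y-%m-%d %H:%M}",
        "tags: [voice-note]",  # hypothetical tag
        "---",
        "",
        f"# {title}",
        "",
    ]
    lines += [f"- {s}" for s in sentences]
    return "\n".join(lines) + "\n"


def save_note(vault: Path, title: str, body: str) -> Path:
    """Write the note into the vault folder, using a sanitized title as file name."""
    safe = re.sub(r'[\\/:*?"<>|#^\[\]]', "", title).strip() or "untitled"
    path = vault / f"{safe}.md"
    path.write_text(body, encoding="utf-8")
    return path


if __name__ == "__main__":
    note = ramblings_to_note("first idea.  second idea! third", "Ideas", datetime.now())
    print(note)
```

Because everything here runs on local files, nothing leaves the machine. The quality of the result still depends on the transcription model, which is the part users in the thread were searching for.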

The Trajectory: Fragmentation Accelerates

Unlike the unified vision of AI advancement promoted at events like GTC 2026, the reality on the ground shows increasing fragmentation. Each user segment—researchers, professional developers, creative professionals, and privacy-conscious individuals—faces distinct implementation challenges that generic AI infrastructure solutions fail to address.

This fragmentation suggests that the next phase of AI development won't be about breakthrough models or massive compute deployments, but rather about solving the mundane yet critical problems of integration, reliability, and usability that currently prevent AI tools from delivering on their promise. Closing the gap between theoretical capability and practical implementation will demand a new focus on the unglamorous but essential work of making AI actually work for its users.